Responsibility & Reliability

AI used in a company must be reliable. Correct content, traceable origin, and clear responsibility are essential if AI is to be accepted and used productively in everyday work. Unclear or incorrect answers directly affect decisions, processes, and results.

Vimmera AI ensures the quality of results through clear principles and defined processes. The systems work with verified knowledge sources rather than unconstrained generation. Role and permission models govern who may view, use, or modify content. Validation mechanisms and continuous improvement during operation keep the knowledge base current, consistent, and reliable.
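To illustrate what a role and permission model for view, use, and modify rights can look like, here is a minimal sketch. The role names (`reader`, `agent`, `editor`), the `ROLE_PERMISSIONS` table, and the `is_allowed` helper are hypothetical and chosen only for illustration; they do not describe Vimmera AI's actual implementation.

```python
from enum import Enum


class Action(Enum):
    """The three kinds of access named in the text."""
    VIEW = "view"
    USE = "use"
    MODIFY = "modify"


# Hypothetical roles and their permitted actions (illustrative assumption).
ROLE_PERMISSIONS = {
    "reader": {Action.VIEW},
    "agent": {Action.VIEW, Action.USE},
    "editor": {Action.VIEW, Action.USE, Action.MODIFY},
}


def is_allowed(role: str, action: Action) -> bool:
    """Return True only if the role is known and explicitly grants the action."""
    return action in ROLE_PERMISSIONS.get(role, set())


if __name__ == "__main__":
    print(is_allowed("reader", Action.VIEW))
    print(is_allowed("reader", Action.MODIFY))
```

Unknown roles receive no permissions in this sketch, so access is denied by default unless it has been granted explicitly.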

This makes AI results reproducible, traceable, and defensible from a subject-matter standpoint. Companies retain control over their information, reduce risks, and can use AI as a reliable tool in production.

Reliability instead of “hallucinations”

The core of Vimmera AI solutions is a quality-assured knowledge base that combines relevant information from documents, systems, processes, experiential knowledge, and structured data. Technically, this relies on methods such as retrieval-augmented generation (RAG), semantic search, context-based queries, and knowledge-based answer generation. The AI draws specifically on verified company information, interprets it in its domain context, and generates traceable results from it rather than inventing content.

A central requirement here is reliability. Vimmera AI designs its systems so that incorrect answers, misunderstandings, and so-called hallucinations are largely avoided. The systems work on the basis of verified sources, with clear control mechanisms and transparent dependencies. When information is missing or uncertain, the system does not guess; it asks follow-up questions or states clearly that a reliable answer is not possible. In professional work environments in particular, this is crucial for trust and quality.
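The principle of answering only from verified sources, and declining when no source supports an answer, can be sketched as follows. This is a simplified illustration under stated assumptions: the sample passages, the source identifiers, the `answer` function, and the `min_score` threshold are all hypothetical, and simple word overlap stands in for the semantic search a production system would use.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical source reference
    text: str


# Hypothetical verified knowledge base (illustrative content only).
KNOWLEDGE_BASE = [
    Passage("service-manual#p12", "The pump filter must be replaced every 6 months."),
    Passage("hr-policy#s3", "Employees may carry over up to 5 vacation days."),
]


def tokens(text: str) -> set:
    """Lowercase word set; a crude stand-in for semantic matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(question: str, passage: Passage) -> float:
    """Fraction of the question's words that also occur in the passage."""
    q = tokens(question)
    return len(q & tokens(passage.text)) / len(q) if q else 0.0


def answer(question: str, min_score: float = 0.5) -> dict:
    """Answer from the best-matching passage, or abstain below the threshold."""
    best = max(KNOWLEDGE_BASE, key=lambda p: score(question, p))
    if score(question, best) < min_score:
        # No sufficiently supported source: do not guess.
        return {"status": "no_reliable_answer", "answer": None, "sources": []}
    return {"status": "answered", "answer": best.text, "sources": [best.source]}


if __name__ == "__main__":
    print(answer("How often must the pump filter be replaced?"))
    print(answer("What is the warranty period for the compressor?"))
```

In this sketch the first question is answered together with its source reference, while the second falls below the threshold and returns an explicit "no reliable answer" status instead of a guess. A real system would likely also offer a follow-up question at that point.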

Security of results

In addition to data protection, Vimmera AI focuses on the security of results. The systems deliver consistent, verifiable, and reproducible outputs, supplemented by context information, source references, and quality mechanisms. The goal is not just speed, but professionally correct, reliable, and trustworthy support in everyday work.

Security here means protecting information, minimizing risks, and at the same time ensuring stable, high-quality results. This creates AI usage that is responsible, professional, and sustainable in the long term.

Quality standards

Powerful AI does not come from quickly rolling out individual tools, but from systematic work with knowledge. For professional organizations, “approximately correct” is not enough. Information must be correct, complete, traceable, and reliable, especially in service, operations, law, technology, or management.

Vimmera AI therefore helps companies systematically capture, clean, and supplement their knowledge and transfer it into a reliable knowledge base. This process takes into account the individual structures, terminology, and working methods of each organization. The solutions are not off-the-shelf products; they are developed together with the specialist departments.

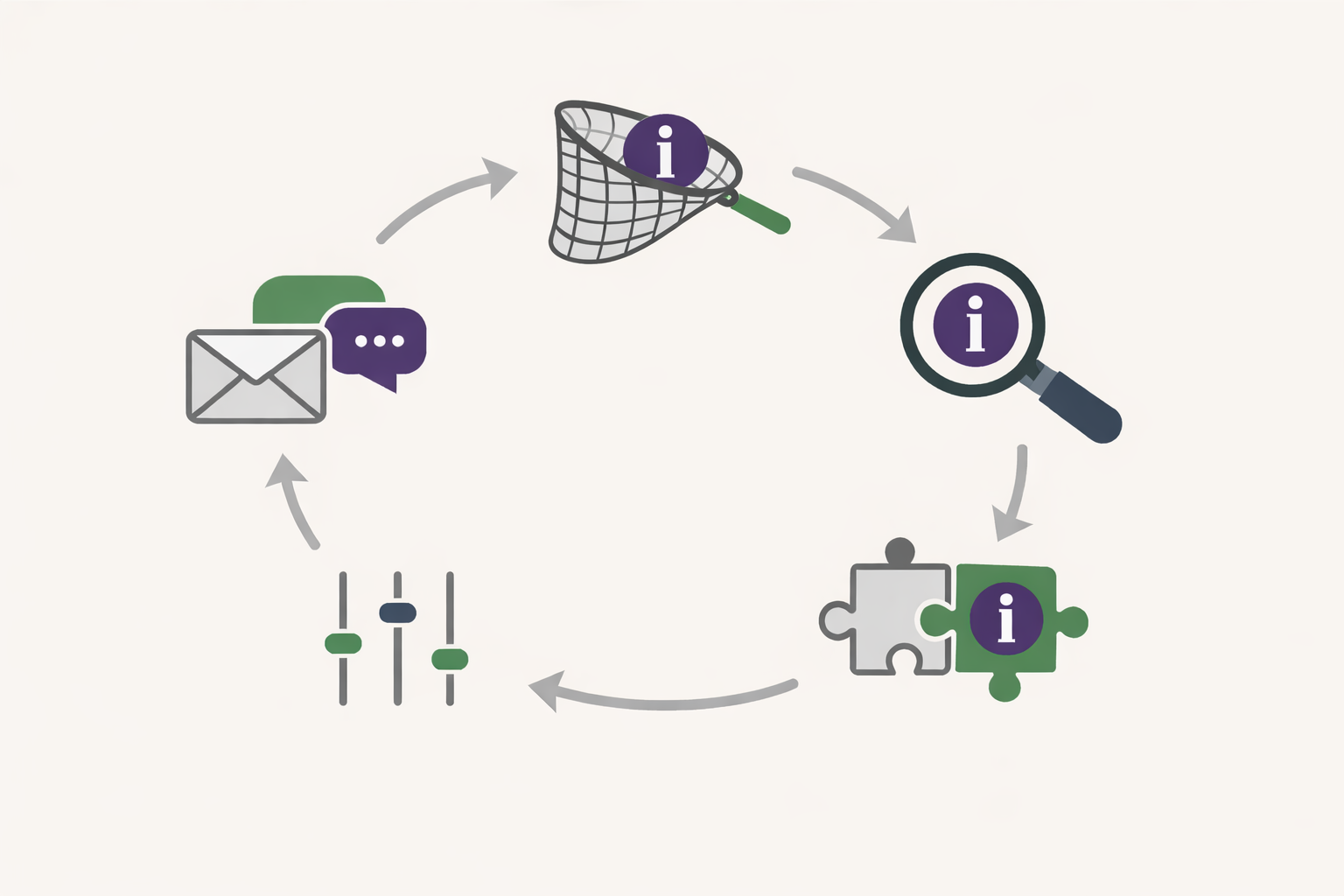

Another component of this approach is quality assurance during ongoing operation. The systems' results are systematically checked, evaluated, and improved. Customers are involved in this process and receive tools to analyze and selectively improve answers, content, and recommendations. This creates a continuous improvement cycle driven by practice, feedback, and system development.

This is how Vimmera AI ensures that AI not only works, but also consistently delivers the quality required for productive use in companies.